Introduction

Every time you type a query into Google and get results in under a second, you’re witnessing something quietly extraordinary. The internet holds over a trillion unique URLs. No search engine scans all of them in real time. Instead, they rely on something built in advance: a search index.

A search index is a massive, structured database that a search engine builds by collecting and organizing information from web pages across the internet. When you search for something, the engine doesn’t browse the web live — it searches this pre-built index, finds the most relevant pages, and returns them almost instantly.

In this guide, you’ll learn exactly what a search index is, how search engines build one, what an inverted index is and why it matters, how indexing connects to your SEO strategy, common indexing mistakes to avoid, and how to check whether your own pages are indexed.

Whether you’re new to SEO, a content creator, or a developer building a search feature, understanding the search index is foundational knowledge.

What Is a Search Index?

A search index is a structured database created by a search engine to store information about web content — including page text, metadata, URLs, and links — so that relevant results can be retrieved almost instantly in response to a user’s query.

Think of it like the index at the back of a textbook. Rather than reading every page to find a topic, you look up the term in the index, which tells you exactly where it appears. Search engines do the same thing, just at a scale of hundreds of billions of web pages.

Without a search index, a search engine would have to scan every page on the internet every time someone searched for something. That would take hours, not milliseconds. The index is the reason you get results in under a second.

Search Index vs. Search Indexing: What’s the Difference?

These two terms are closely related but distinct:

- Search index — the database itself. The structured repository where information about web pages is stored.

- Search indexing — the process of building and updating that database. It’s the ongoing work of crawling pages, analyzing their content, and adding them to the index.

Both the noun and the process are essential — one is the destination, the other is the journey.

How Does a Search Engine Index Work?

To understand the index, you need to understand how search engines build it. The process has three distinct stages: crawling, indexing, and ranking. The index is the output of the second stage and the input to the third.

Stage 1: Crawling

Before anything can be indexed, it must be discovered. Search engines deploy automated programs called web crawlers (also known as web spiders or bots) that continuously browse the internet, following links from one page to another.

Google’s crawlers, for example, start from a list of known URLs and expand outward by following every link they encounter. New content gets discovered this way — which is why internal linking and backlinks matter so much for SEO. If no one links to your page, the crawler may never find it.
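
The link-following behavior described above can be sketched as a breadth-first traversal. This is a minimal illustration, not how Google's crawler is implemented: the `SITE` dictionary, the `crawl` function, and the example.com URLs are hypothetical, and pages are read from memory rather than fetched over HTTP.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(seed_url, fetch):
    """Breadth-first discovery: visit each URL once and follow its links.

    `fetch` is any callable that returns the HTML for a URL, or None.
    """
    seen = {seed_url}
    queue = deque([seed_url])
    order = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        order.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return order


# Hypothetical site held in memory instead of fetched over the network.
SITE = {
    "https://example.com/": '<a href="/shoes">Shoes</a>',
    "https://example.com/shoes": '<a href="/">Home</a> <a href="/tips">Tips</a>',
    "https://example.com/tips": "<p>No links here</p>",
    "https://example.com/orphan": "<p>Nothing links to me</p>",
}

discovered = crawl("https://example.com/", SITE.get)
```

In this sketch the `/orphan` page exists but is never discovered, because no crawled page links to it.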

Stage 2: Indexing

Once a page is crawled, the search engine analyzes its content. This is the indexing stage. The engine reads:

- The body text and headings

- Meta tags and related attributes (title tag, meta description, image alt text)

- Structured data (schema markup)

- Images and videos (to the extent possible)

- Internal and external links

- Page metadata (publish date, language, mobile-friendliness)

It then stores a processed version of this data in the index. Not every crawled page makes it into the index — quality signals, duplicate content detection, and technical barriers (like noindex tags) can prevent inclusion.
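
As a rough illustration of the analysis step, the sketch below pulls a few of the fields listed above out of a page using Python's standard `html.parser`. The `PageAnalyzer` class and the sample HTML are hypothetical; a production indexer extracts far more and handles malformed markup more carefully.

```python
from html.parser import HTMLParser


class PageAnalyzer(HTMLParser):
    """Pulls out a few fields an indexer might store for a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.headings = []
        self.links = []
        self.noindex = False
        self._current = None  # tag whose text is currently being collected

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("title", "h1", "h2"):
            self._current = tag
            if tag != "title":
                self.headings.append("")
        elif tag == "meta":
            name = (attrs.get("name") or "").lower()
            content = attrs.get("content") or ""
            if name == "description":
                self.description = content
            elif name == "robots" and "noindex" in content.lower():
                self.noindex = True  # the page asks not to be indexed
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current in ("h1", "h2"):
            self.headings[-1] += data


# Hypothetical page used only for illustration.
HTML = """
<html><head>
<title>Running Shoes for Beginners</title>
<meta name="description" content="How to pick your first pair.">
</head><body>
<h1>Running shoes</h1>
<p>Start with <a href="/fit">fit</a>.</p>
<h2>Cushioning</h2>
</body></html>
"""

page = PageAnalyzer()
page.feed(HTML)
should_index = not page.noindex
```

Here `should_index` stays true because the sample page carries no `noindex` directive; adding `<meta name="robots" content="noindex">` to the head would flip it.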

Stage 3: Ranking

When a user enters a query, the search engine searches its index for relevant pages and ranks them using hundreds of algorithmic signals, including relevance, authority, and user experience. The ranked list is what you see on the SERP (search engine results page).

The index is what makes ranking fast. Ranking algorithms work on indexed data, not live web pages.
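
To make the idea of ranking over indexed data concrete, here is a toy relevance scorer based on TF-IDF, a classic textbook technique. It is not Google's ranking algorithm, which combines many more signals. The `INDEXED` data and the `rank` function are hypothetical and exist only for illustration.

```python
import math
from collections import Counter

# Hypothetical indexed content: each page is already reduced to a list of terms.
INDEXED = {
    "Page A": ["best", "running", "shoes", "marathon"],
    "Page B": ["running", "shoes", "beginners", "running"],
    "Page C": ["marathon", "training", "tips"],
}


def rank(query, indexed):
    """Order pages by a simple TF-IDF relevance score, best match first."""
    n_docs = len(indexed)
    terms = query.lower().split()
    # Document frequency: in how many pages does each query term appear?
    df = {t: sum(1 for words in indexed.values() if t in words) for t in terms}
    scores = {}
    for doc, words in indexed.items():
        counts = Counter(words)
        score = 0.0
        for t in terms:
            if df[t]:
                tf = counts[t] / len(words)          # how prominent the term is
                idf = math.log(1 + n_docs / df[t])   # rarer terms weigh more
                score += tf * idf
        if score > 0:
            scores[doc] = score
    return sorted(scores, key=scores.get, reverse=True)


ranking = rank("running shoes", INDEXED)
```

Page B ranks above Page A for this query because "running" makes up a larger share of its terms, and Page C is left out because it contains neither word.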

What Is an Inverted Index?

The term “inverted index” sounds technical, but the concept is intuitive. It’s the core data structure that makes search fast.

A Forward Index vs. an Inverted Index

Imagine you have three web pages:

- Page A: “The best running shoes for marathons”

- Page B: “Running shoes for beginners”

- Page C: “Marathon training tips”

A forward index maps documents to the words they contain:

| Document | Words |

|---|---|

| Page A | best, running, shoes, marathons |

| Page B | running, shoes, beginners |

| Page C | marathon, training, tips |

A forward index is slow for search. To find which pages contain “running shoes,” you’d need to scan every single document.

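An inverted index flips this around: it maps each word to the documents containing it. The table shows a subset of the words, with “marathons” normalized to “marathon” so that Page A and Page C share an entry: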

| Word | Documents |

|---|---|

| running | Page A, Page B |

| shoes | Page A, Page B |

| marathon | Page A, Page C |

| training | Page C |

| beginners | Page B |

Now when someone searches “running shoes,” the engine looks up those two words in the inverted index and immediately knows which pages contain them — without scanning any page content at all.

This is why Google can search hundreds of billions of pages in milliseconds. The index does the heavy lifting in advance.
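
The three-page example can be reproduced in a few lines of Python. This is a minimal sketch under stated assumptions: the stop-word list and the `NORMALIZE` mapping are hypothetical stand-ins for the language processing a real indexer performs, and the function names are illustrative.

```python
from collections import defaultdict

PAGES = {
    "Page A": "The best running shoes for marathons",
    "Page B": "Running shoes for beginners",
    "Page C": "Marathon training tips",
}

STOP_WORDS = {"the", "for"}               # assumed: words too common to index
NORMALIZE = {"marathons": "marathon"}     # assumed: tiny stand-in for stemming


def tokenize(text):
    """Lowercase the text, drop stop words, and normalize known variants."""
    words = [w for w in text.lower().split() if w not in STOP_WORDS]
    return [NORMALIZE.get(w, w) for w in words]


def build_forward_index(pages):
    """Document -> list of words it contains."""
    return {doc: tokenize(text) for doc, text in pages.items()}


def build_inverted_index(forward):
    """Word -> set of documents containing it."""
    inverted = defaultdict(set)
    for doc, words in forward.items():
        for word in words:
            inverted[word].add(doc)
    return inverted


def search(inverted, query):
    """Return the documents that contain every term in the query."""
    postings = [inverted.get(term, set()) for term in tokenize(query)]
    return set.intersection(*postings) if postings else set()


forward = build_forward_index(PAGES)
inverted = build_inverted_index(forward)
results = search(inverted, "running shoes")
```

The query touches only two entries of `inverted` and intersects their document sets, so the cost depends on the number of query terms and matching pages rather than on the total amount of page text.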

What Information Does a Search Index Contain?

A search index isn’t just a list of URLs. It stores rich, layered information about each page, including:

- Full-text content — the processed words and phrases on the page

- Metadata — title tags, meta descriptions, canonical URLs

- Signals — PageRank, link profile, page speed, mobile-friendliness

- Structured data — schema markup that helps the engine understand the page’s context

- Freshness signals — when the page was last modified or crawled

- Duplicate content flags — which page in a cluster is treated as canonical

- Language and locale — for serving the right content to the right audience

Modern search indexes also incorporate semantic understanding — meaning the engine doesn’t just match exact words but understands intent and context. This is partly why searching “best sneakers for long runs” can surface results about marathon running shoes even without exact keyword matches.

How Big Is Google’s Search Index?

Google processes an almost incomprehensible amount of data. As of recent estimates, Google’s index contains hundreds of billions of web pages, stored across thousands of servers distributed across the globe. The index is constantly updated — pages are re-crawled, re-indexed, and re-ranked on an ongoing basis.

This scale is why Google’s indexing infrastructure is one of the most complex engineering feats in history. Even with this scale, not every page on the web is indexed — Google selectively chooses which pages to add based on quality, relevance, and crawl budget.

Why Does the Search Index Matter for SEO?

Here’s the fundamental truth of SEO: if your page isn’t in the index, it doesn’t exist for search engines. It cannot rank. It cannot drive organic traffic. Full stop.

Indexing is the prerequisite for everything else in SEO. You can write the best content in the world, earn high-quality backlinks, and optimize every technical element — but if your page is blocked from indexing, none of it matters.

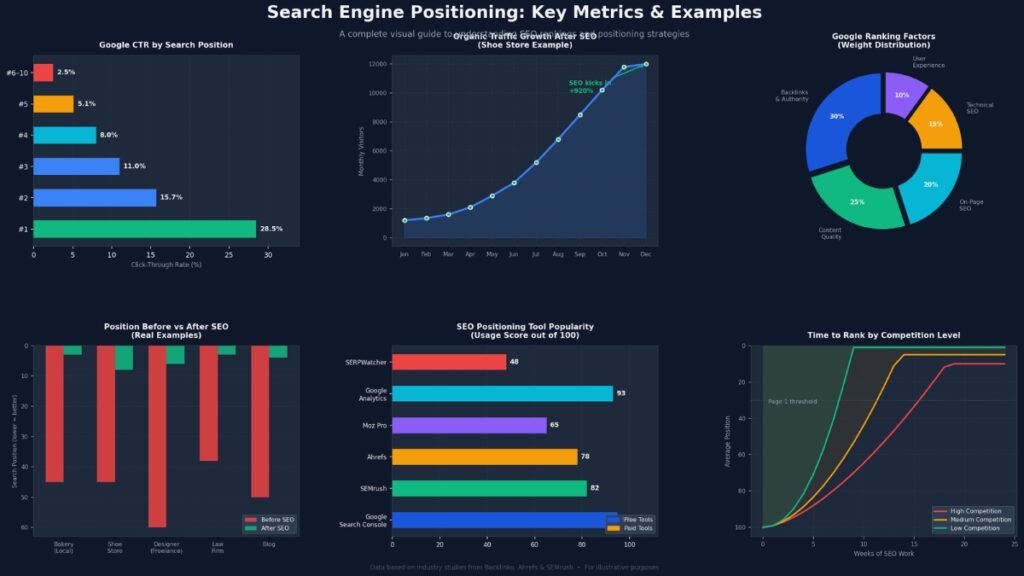

How Indexing Affects Rankings

Being indexed doesn’t guarantee a ranking, but it’s the necessary first step. After indexing, ranking is determined by:

- Relevance — how closely the page content matches the query

- Authority — the quality and quantity of backlinks pointing to the page

- Experience signals — page speed, mobile-friendliness, Core Web Vitals

- Content quality — depth, accuracy, freshness, and E-E-A-T signals

The index is the stage; ranking is the performance. You can’t perform without a stage.

How to Get Your Pages Indexed

There are several reliable methods to help search engines discover and index your pages:

1. Submit an XML Sitemap

An XML sitemap is a file that lists all the important URLs on your website. Submitting it to Google Search Console (and Bing Webmaster Tools) signals to crawlers which pages exist and how often they’re updated. This is one of the fastest ways to get new content indexed.
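
For reference, a sitemap file can be generated with Python's standard library. The sketch below follows the sitemaps.org format with `loc` and `lastmod` elements; the `build_sitemap` function, URLs, and dates are hypothetical examples.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical URLs and dates for illustration only.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/search-index", "2024-02-01"),
])
```

The resulting string would typically be saved as `sitemap.xml` at the site root and then submitted in Google Search Console.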

2. Build Internal Links

Crawlers navigate your site by following links. Every page on your site should be reachable through at least one internal link from another indexed page. Orphan pages — pages with no internal links pointing to them — are at serious risk of never being crawled.
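
One way to spot orphan pages is to compare the list of pages you publish against the pages that receive at least one internal link. The sketch below assumes you already have a map of internal links (for example, exported from a site crawl); the `LINKS` data and `find_orphans` function are hypothetical.

```python
def find_orphans(internal_links):
    """Return pages that no other page links to.

    `internal_links` maps each page URL to the internal URLs it links to.
    Self-links are ignored, since they do not help a crawler reach the page.
    """
    linked_to = {
        target
        for source, targets in internal_links.items()
        for target in targets
        if target != source
    }
    return sorted(set(internal_links) - linked_to)


# Hypothetical internal link map for a small site.
LINKS = {
    "/": ["/blog", "/shoes"],
    "/blog": ["/", "/blog/search-index"],
    "/shoes": ["/"],
    "/blog/search-index": ["/blog"],
    "/blog/new-post": ["/"],  # links out, but nothing links in
}

orphans = find_orphans(LINKS)
```

Here `/blog/new-post` is flagged: it links to the homepage, but no page links back to it.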

3. Earn Backlinks

When authoritative external sites link to your content, crawlers follow those links and discover your page. High-quality backlinks don’t just improve rankings — they accelerate indexing.

4. Use Google Search Console’s URL Inspection Tool

For newly published or updated pages, you can request indexing directly through Google Search Console’s URL Inspection tool. Enter the URL, check its current status, and click “Request Indexing.” This doesn’t guarantee immediate indexing but notifies Google that the page is ready.

5. Ensure Crawlability

Make sure your robots.txt file isn’t accidentally blocking crawlers from important sections of your site. Also verify that pages you want indexed don’t have a noindex meta tag applied.
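
A quick way to test robots.txt rules before deploying them is Python's built-in `urllib.robotparser`. The rules and URLs below are hypothetical, and this parser implements the basic standard rather than every extension that a specific search engine supports, so treat the result as a first check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given rules forbid the user agent to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


urls = [
    "https://example.com/",
    "https://example.com/blog/search-index",
    "https://example.com/admin/login",
    "https://example.com/search?q=shoes",
]

blocked = blocked_urls(ROBOTS_TXT, urls)
```

Running the same check with the single rule `Disallow: /` reports every URL as blocked, which is the site-wide mistake worth catching before launch.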

What Prevents Pages from Being Indexed?

Understanding common indexing barriers is just as important as knowing how to encourage indexing.

| Barrier | Cause | Fix |

|---|---|---|

| noindex tag | Added to page’s <head> | Remove the tag from pages you want indexed |

| robots.txt block | Disallow rule in robots.txt | Update rules to allow crawling |

| Thin or duplicate content | Page has little original value | Improve content quality or use canonical tags |

| Crawl budget exhaustion | Large sites with many low-value pages | Prioritize important pages; reduce crawl waste |

| Low PageRank / no links | No internal or external links | Add links to the page |

| JavaScript rendering issues | Content only loads via JS | Server-side render or pre-render critical content |

| Password protection | Page requires login | Ensure key pages are publicly accessible |

| Slow page speed | Crawlers may deprioritize slow pages | Improve Core Web Vitals |

How to Check If Your Page Is Indexed

There are three main ways to check indexing status:

Method 1: Google Search Console (most reliable). Open the URL Inspection tool, paste your URL, and Google will tell you whether it’s indexed, the last crawl date, any issues found, and whether it’s eligible to appear in search results.

Method 2: The site: operator. Type site:yourdomain.com into Google. This returns a list of pages from your domain currently in Google’s index. Note that this isn’t exhaustive (large sites may show incomplete results), but it’s a quick sanity check.

Method 3: Direct search. Search for unique phrases from your page. If the page appears, it’s indexed. If it doesn’t, it may not be.

Search Indexing in Non-Google Contexts

Search indexes aren’t exclusive to Google or Bing. The same principles apply to:

- E-commerce sites — Amazon’s product search uses an internal index to return results when you search “wireless headphones.” Without indexing, search across millions of products would be impossibly slow.

- Site search — Many websites use internal search tools (like Algolia or AddSearch) that build their own indexes of site content.

- Enterprise search — Companies use search indexes to let employees search internal documents, CRMs, and databases instantly.

- App search — Mobile apps index their data so in-app search feels instant.

The underlying principles — collect, structure, retrieve — are universal. Google’s scale is just dramatically larger.

Pro Tips: Maximizing Your Indexing Efficiency

Keep your sitemap current. Remove deleted pages and add new ones promptly. An outdated sitemap wastes crawl budget.

Consolidate duplicate content. Use canonical tags to tell search engines which version of similar pages should be indexed. Duplicate content dilutes your crawl budget and confuses the indexer.
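
As a sketch of how duplicate URLs might be collapsed onto one canonical version, the function below strips tracking parameters and trailing slashes before emitting a canonical link tag. Which parameters are safe to drop is site-specific; the `TRACKING_PARAMS` set and function names here are assumptions for illustration.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change page content on this site.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref"}


def canonicalize(url):
    """Map URL variants that show the same content onto one canonical URL."""
    parts = urlsplit(url)
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key not in TRACKING_PARAMS
    )
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), path, urlencode(query), "")
    )


def canonical_tag(url):
    """Build the <link> element to place in the page's <head>."""
    return f'<link rel="canonical" href="{canonicalize(url)}">'


variants = [
    "https://Example.com/shoes/?utm_source=newsletter",
    "https://example.com/shoes?ref=homepage",
    "https://example.com/shoes",
]
canonical_urls = {canonicalize(u) for u in variants}
```

All three variants collapse to a single URL, which is the one the canonical tag on each variant should point to.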

Prioritize crawl budget for large sites. If your site has thousands of pages, low-quality pages (thin content, parameter URLs, pagination) can eat crawl budget that should be spent on important content. Use noindex selectively on truly valueless pages.

Monitor index coverage reports. Google Search Console’s Page indexing report (formerly called Index Coverage) shows which pages are indexed and which are not, along with the reason for each exclusion. Check it regularly.

Structured data helps the index understand context. Schema markup doesn’t directly cause indexing but helps Google understand what your content is about, which can improve how it’s represented in search results.

Common Mistakes That Hurt Indexing

Accidentally blocking crawlers

Accidentally adding Disallow: / to your robots.txt or deploying a noindex tag site-wide (a common staging server mistake) can de-index your entire site. Always double-check these settings after migrations or CMS updates.

Over-indexing low-value pages

More isn’t always better. Indexing thousands of thin pages (auto-generated tags, search result pages, parameter-based duplicates) dilutes crawl budget and signals lower overall site quality. Be selective.

Ignoring JavaScript rendering

Content rendered exclusively via JavaScript may not be crawled or indexed properly, since crawlers may not execute JavaScript the same way a browser does. Critical content should be accessible in the HTML source.
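
A simple way to check whether critical content is present in the HTML source is to extract the visible text without running any scripts and look for a key phrase. The sketch below does this with the standard `html.parser`; the sample pages and the `visible_in_source` helper are hypothetical.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects page text, ignoring the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip = 0  # depth inside script/style elements

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.chunks.append(data)


def visible_in_source(html, phrase):
    """True if the phrase appears in the HTML text without executing JavaScript."""
    parser = TextExtractor()
    parser.feed(html)
    text = " ".join(" ".join(parser.chunks).split())  # collapse whitespace
    return phrase.lower() in text.lower()


# Hypothetical pages: one rendered on the server, one filled in by JavaScript.
SERVER_RENDERED = "<html><body><h1>Marathon training tips</h1></body></html>"
CLIENT_RENDERED = (
    '<html><body><div id="app"></div><script>'
    'document.getElementById("app").textContent = "Marathon training tips";'
    "</script></body></html>"
)

in_server_html = visible_in_source(SERVER_RENDERED, "marathon training tips")
in_client_html = visible_in_source(CLIENT_RENDERED, "marathon training tips")
```

The phrase is found in the server-rendered page but not in the client-rendered one, where it exists only inside a script that a crawler would have to execute.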

Publishing without internal links

A new blog post with no internal links is an island. Crawlers can only reach it if they already know it exists. Every new piece of content should be linked to from at least one relevant existing page.

Neglecting mobile indexing

Google primarily uses the mobile version of your content for indexing (this is called mobile-first indexing). If your mobile pages are missing content present on your desktop version, your indexing and rankings will suffer.


FAQs: What Is a Search Index?

What is a search index in simple terms?

A search index is a structured database that a search engine builds by analyzing web pages. It stores key information about each page (text, keywords, links, and metadata) so that relevant results can be retrieved almost instantly when someone performs a search. Think of it as a giant, organized catalog of the internet.

What is the difference between crawling and indexing?

Crawling is the discovery phase: automated bots browse the web, following links to find pages. Indexing is the analysis and storage phase: the engine reads those pages, processes their content, and stores the data in its index database. A page must be crawled before it can be indexed, but not every crawled page gets indexed.

What is an inverted index in a search engine?

An inverted index is the data structure most search engines use to enable fast retrieval. Instead of mapping documents to the words they contain (forward index), it maps each word to all the documents containing it. This allows the engine to find all relevant pages for a query instantly, without scanning page content in real time.

Why is my page not showing up in Google?

Several issues can prevent indexing: a noindex meta tag, a robots.txt block, thin or duplicate content, no internal or external links pointing to the page, or JavaScript rendering issues. Use Google Search Console’s URL Inspection tool to diagnose the specific problem.

How long does it take for a page to get indexed by Google?

It varies widely, from a few hours for high-authority sites with active crawl budgets to several weeks for new or low-authority sites. You can speed up the process by submitting your URL through Google Search Console, building internal links to the page, and sharing it to attract backlinks.

Does every page on my website get indexed?

Not automatically, and not always. Google selectively indexes pages based on quality signals, crawl budget, and technical accessibility. Low-quality pages, duplicates, or pages blocked by technical directives may not be indexed. It’s best practice to review your index coverage regularly in Google Search Console.

What’s the difference between a search index and a search engine?

A search engine is the complete system (crawling, indexing, and ranking) that allows users to find information online. The search index is one specific component of that system: the database where analyzed page data is stored and retrieved from. The index is the core infrastructure that makes a search engine fast.

Conclusion

A search index is the backbone of every search engine. Without it, searching the internet in real time would be impossibly slow. It’s the structured database built from crawling and analyzing billions of web pages, enabling near-instant retrieval of relevant results.

Here’s what to remember:

- A search index is a pre-built database; search engines query it, not the live web.

- A search engine works in three stages: crawling, indexing, and ranking.

- The inverted index data structure is what makes search lightning-fast.

- For SEO, indexing is the prerequisite to ranking — if your page isn’t indexed, it can’t appear in search results.

- Use XML sitemaps, internal links, and Google Search Console to manage and monitor your indexing status.

- Avoid common mistakes: accidental noindex tags, blocking crawlers, over-indexing thin pages, and neglecting JavaScript rendering.

Understanding the search index isn’t just academic knowledge; it directly shapes how you build, structure, and optimize your content for discoverability.
